I realise this is a known issue and that lemmy.world isn’t the only instance that does this. Also, I’m aware that there are other things affecting federation. But I’m seeing some things not federate, and can’t help thinking that things would be going smoother if all the output from the biggest lemmy instance wasn’t 50% spam.

Hopefully this doesn’t seem like I’m shit-stirring, or trying to make the Issue I’m interested in more important than other Issues. It’s something I mention occasionally, but it might be a bit abstract if you’re not the admin of another instance.

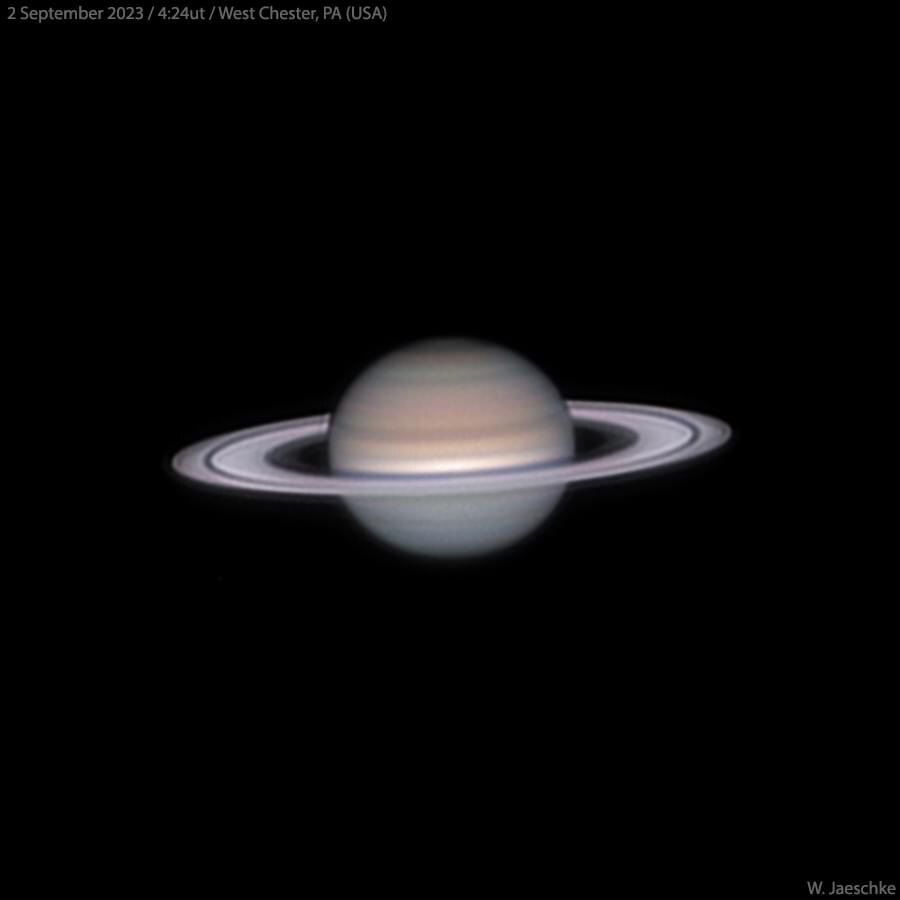

The red terminal is a tail -f of the nginx log on my server. The green terminal is outputting some details from the ActivityPub JSON containing the Announce. You should be able to see the correlation between the lines in the nginx log, and lines from the activity, and that everything is duplicated.

This was generated by me commenting on an old post, using content that spawns an answer from a couple of bots, and then me upvoting the response. (so CREATE, CREATE, LIKE, is being announced as CREATE, CREATE, CREATE, CREATE, LIKE, LIKE). If you scale that up to every activity by every user, you’ll appreciate that LW is creating a lot of work for anyone else in the Fediverse, just to filter out the duplicates.
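For anyone wondering what "filtering out the duplicates" looks like on the receiving end, here is a minimal sketch. It assumes the duplicated Announces wrap an activity with the same `object.id`, which is what the screenshot suggests; the class name, function name and cache size are hypothetical, and this is not how Lemmy or any other particular server does it.

```python
from collections import OrderedDict


def wrapped_id(announce):
    """Return the id of the activity wrapped by an Announce, or None."""
    obj = announce.get("object")
    if isinstance(obj, dict):
        return obj.get("id")
    # ActivityPub also allows the object to be a bare URI string.
    return obj if isinstance(obj, str) else None


class SeenActivities:
    """Bounded memory of recently seen activity ids (oldest evicted first)."""

    def __init__(self, max_size=10_000):
        self.max_size = max_size
        self._seen = OrderedDict()

    def is_duplicate(self, announce):
        activity_id = wrapped_id(announce)
        if activity_id is None:
            # Nothing to compare against, so let it through.
            return False
        if activity_id in self._seen:
            self._seen.move_to_end(activity_id)
            return True
        self._seen[activity_id] = True
        if len(self._seen) > self.max_size:
            self._seen.popitem(last=False)
        return False
```

Every incoming Announce still has to be received and parsed before `is_duplicate` can be called, which is the extra work being described: the receiver pays for the duplicate even when it throws it away.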

If it’s reproducible, you should file a bug report with Lemmy itself.

I can’t reproduce anything, because I don’t run Lemmy on my server. It’s possible to infer that it’s related to the software (because LW didn’t do this when it was on 0.18.5). However, it’s not something that, for example, lemmy.ml does. An admin on LW matrix chat suggested that it’s likely a combination of instance configuration and software changes, but a bug report from me (who has no idea how LW is set up) wouldn’t be much use.

I’d gently suggest that, if LW admins think it’s a configuration problem, they should talk to other Lemmy admins, and if they think Lemmy itself plays a role, they should talk to the devs. I could be wrong, but this has been happening for a while now, and I don’t get the sense that anyone is talking to anyone about it.

You can still make a bug report, so that somebody with the actual means can look into it. If every action is duplicated that’d be pretty severe, if not, well it’ll be closed.

A bug report for software I don’t run, and so can’t reproduce the problem on, would be closed anyway. I think ‘steps to reproduce’ is pretty much the first line in a bug report.

If I ran a server that used someone else’s software to allow users to download a file, and someone told me that every 2nd byte needed to be discarded, I like to think I’d investigate and contact the software vendors if required. I wouldn’t tell the user that it’s something they should be doing. I feel like I’m the user in this scenario.

Idk, it’s FOSS, so you could always try, but just complaining and hoping somebody picks up on it probably also works. I don’t judge :)

I’ve since relented, and filed a bug

<3

Oooooo you sassin

It seems it’s a misconfiguration problem when running multiple server processes, which is mostly only done by larger instances. So it’s not a bug in Lemmy per se, but perhaps a bug in lemmy.world’s configuration.

Are you able to include the HTTP method being called and the amount of data transferred per request? It’s possible that the first request is an OPTIONS request and then the second request is a POST.

If you can see the amount of data transferred, then you have some more indication that double the requests are being sent, and you can at least quantify the bandwidth impact.

They’re all POST requests. I trimmed it out of the log for space, but the first 6 requests in the video looked like this (nginx shows the data amount for GET, but not POST):

    ip.address - - [07/Apr/2024:23:18:44 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"
    ip.address - - [07/Apr/2024:23:18:44 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"
    ip.address - - [07/Apr/2024:23:19:14 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"
    ip.address - - [07/Apr/2024:23:19:14 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"
    ip.address - - [07/Apr/2024:23:19:44 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"
    ip.address - - [07/Apr/2024:23:19:44 +0000] "POST /inbox HTTP/1.1" 200 0 "-" "Lemmy/0.19.3; +https://lemmy.world"

If I was running Lemmy, every second line would say 400, from it rejecting it as a duplicate. In terms of bandwidth, every line represents a full JSON, so I guess it’s about 2K minimum for the standard cruft, plus however much for the actual contents of comment (the comment replying to this would’ve been 8K)
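A rough way to count these from the access log, as a sketch only: it assumes nginx's default `combined` log format (which the lines above appear to follow), and the function name is made up. Note that the size column in that format is the response body size, so it says nothing about how big each POST was; logging that would need `$request_length` in a custom `log_format`.

```python
import re
from collections import Counter

# Matches nginx's default "combined" format; anything else is skipped.
LOG_LINE = re.compile(
    r'(?P<addr>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) (?P<size>\d+) '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def count_inbox_posts(lines):
    """Count POST /inbox requests per (timestamp, user agent) pair."""
    counts = Counter()
    for line in lines:
        m = LOG_LINE.match(line.strip())
        if m and m["method"] == "POST" and m["path"] == "/inbox":
            counts[(m["time"], m["agent"])] += 1
    return counts
```

Two requests from the same sender in the same second are only a hint, not proof, of duplication. Confirming it still means comparing the request bodies.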

My server just took the requests and dumped the bodies out to a file, and then a script was outputting the object.id, object.type and object.actor into /tmp/demo.txt (which is another confirmation that they were POST requests, of course)
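The extraction step is roughly the following. This is a sketch rather than the actual script: the function name is hypothetical, and it assumes each dumped body is a single JSON document whose `object` is the wrapped activity.

```python
import json


def summarise_announce(body):
    """Return 'id type actor' for the activity inside an Announce, or None."""
    activity = json.loads(body)
    if activity.get("type") != "Announce":
        return None
    obj = activity.get("object")
    if not isinstance(obj, dict):
        # The object can also be a bare URI, in which case there is
        # no embedded type or actor to print.
        return None
    return f'{obj.get("id")} {obj.get("type")} {obj.get("actor")}'
```

Appending each non-None result to a file and running `tail -f` on it gives something like the green terminal: identical consecutive lines mean the same activity arrived twice.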

If the first one is OPTIONS, would that be a bug? Would the right design principle be to do it once per endpoint and then cache it for future requests?

I’m really curious cause I don’t know how this usually works…

That’s pretty standard with most libraries

I’ve never really seen this in (Java/Rust/PHP) backend personally, only in client-side JS (the CORS preflight).

It’s a security feature for browsers doing calls (checking the CORS headers before actually calling the endpoint), but for backends the only place it makes sense is if you’re implementing something like webhooks, to validate the (user submitted) endpoint.

I wonder if the legacy webhooks implementation in Lemmy has left some artifacts that show up when the services that comprise Lemmy are split up as they are for larger instances.

This is pure speculation.

Ok so my assumptions were right. Interesting…

Thanks for sharing!

Is it a misconfiguration or a software bug?

When I’ve mentioned this issue to admins at lemmy.ca and endlesstalk.org (relevant posts here and here), they’ve suggested it’s a misconfiguration. When I said the same to lemmy.world admins (relevant comment here), they also suggested it was a misconfiguration. I mentioned it again recently on the LW channel, and it was only then that Lemmy itself was proposed as a possible cause. It happens on plenty of servers, but not all of them, so I don’t know where the fault lies.

Could it be something to do with an instance running multiple servers, i.e. for horizontal scaling? Maybe there’s some configuration problem where two servers are sending out the federation rather than just one.

Obviously if you only run one lemmy process (which I think most smaller instances do) then it’s not a problem. But if you run more (which I’d assume lemmy.world does) then it could perhaps be the reason.

EDIT: Probably should’ve checked your links first as that seems to be exactly what is happening lol

I’m only running one process, so I’d assume the problem isn’t happening for Feddit.dk.

Sounds like there might be a bug in Lemmy then. Please open an issue in the Lemmy repo.

Yeah, that’s the conclusion I came away with from the lemmy.ca and endlesstalk.org chats: that it’s due to multiple Docker containers. In the LW Matrix room though, an admin said he saw one container send the same activity out 3 times. Also, LW were presumably running multiple containers with 0.18.5, when it didn’t happen, so it may be that multiple containers is only part of the problem.

I’m not surprised that it changed from 0.18.5 as the way that you spread the federation queue out changed significantly from that version to 0.19.

All the information is here but if you just run the same configuration as before 0.19, then you will have trouble.

I see the most duplicated activities from programming.dev and mander.xyz, but it happens a lot.

I’ve been coerced into reporting it as a bug in Lemmy itself - perhaps you could add your own observations here so I seem like less of a crank. Thanks.

It’s probably the OPTIONS call before the actual HTTP call.

https://developer.mozilla.org/en-US/docs/Web/HTTP/Methods/OPTIONS

Don’t think it’s a bug unless you can prove it’s the same method

We were typing at the same time, it seems. I’ve included more info in a comment above, showing that they were POST requests.

Also, the green terminal is outputting part of the body of each request, to demonstrate. If they weren’t POST requests to /inbox, my server wouldn’t have even picked them up.

EDIT: by ‘server’ I mean the back-end one, the one nginx is reverse-proxying to.

I’m curious why there isn’t (as far as I’m aware at the moment) a way to prohibit responding to a post from 3+ years ago

Why would there be? Old threads can be very useful after years, and discussion can continue, especially with software-related threads. I find lots of bug fixes for stuff on Reddit from years ago, where a thread gets updated by a single person posting years later with a fix, on the subreddits that don’t annoyingly auto-archive “old” posts

Being able to respond to old posts is a good thing, like classic forums. I always hated that Reddit didn’t allow you to do that, and Reddit also didn’t have sort options for New Comments or Active.

Imagine if someone made a post about a tech issue, it ranked high on Google results, lots of people in the comments with the same issue, and you found the solution, but the post was too old to reply to.